The development and use of killer robots appear to be growing with technological advances, but a United Nations (UN) conference last week made little headway on limiting them. That outcome has prompted stepped-up calls to ban such weapons through a new treaty.

These killer robots come in different forms, including remotely operated robotic combat vehicles that can launch missiles. In some cases, the robots decide on their own whom and where to attack.

It might have looked like an obscure UN conclave, but a meeting last week in Geneva, Switzerland, was followed intently by experts in military strategy, artificial intelligence (AI), disarmament, and humanitarian law.

The reason for the interest in the meeting is killer robots. The robots include guns, drones, and bombs that decide independently, using their artificial brains, whether to attack and destroy. The experts believe that something must be done to regulate or ban these killer robots altogether.

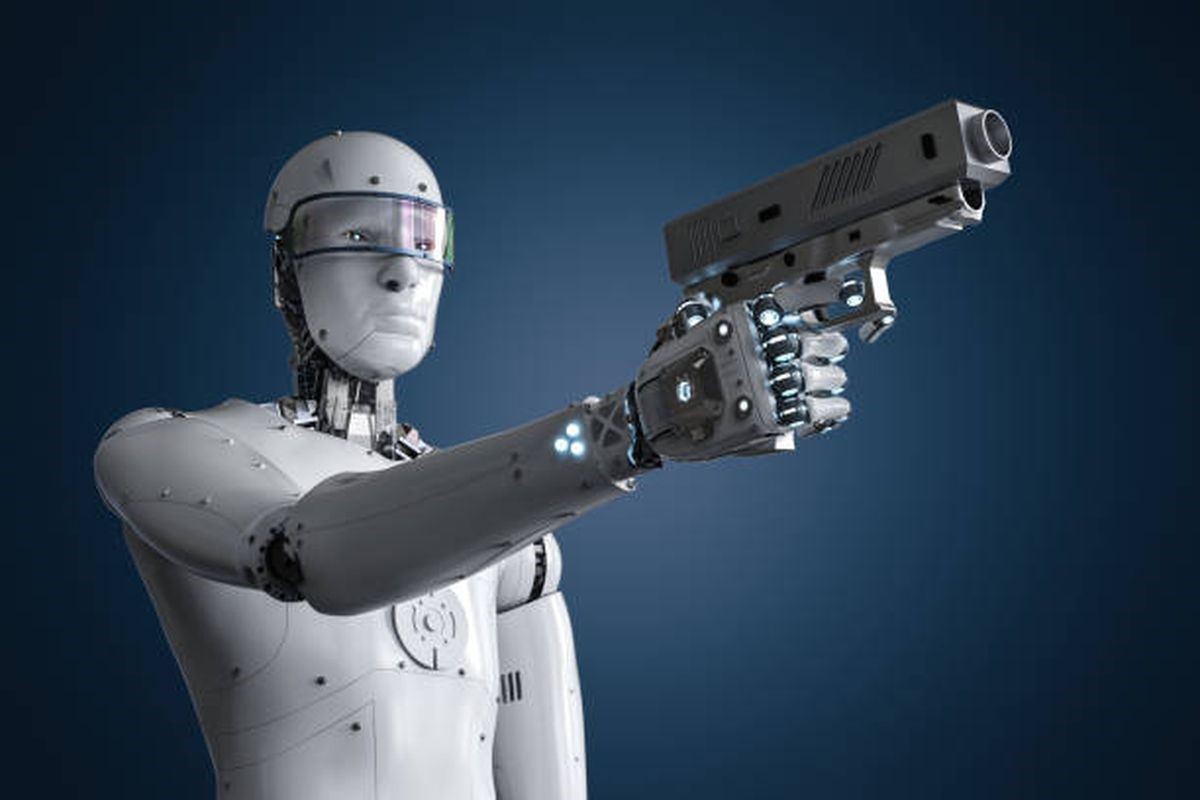

Killer robots were once just the domain of science fiction films like “RoboCop” and the “Terminator” series. More formally known as Lethal Autonomous Weapons Systems, they have since been invented and tested at speed, in some cases with little or no oversight. Some have already been used in real conflicts.

The rapid development of these machines is seen as a potentially seismic event in warfare, comparable to the invention of gunpowder and nuclear bombs. In 2021, for the first time, most of the 125 nations that belong to an agreement known as the Convention on Certain Conventional Weapons (CCW) expressed their desire to limit the development and use of killer robots.

However, they were opposed by members that are developing these weapons, mainly Russia and the United States. The conference concluded on December 17 with only a short, vague statement about considering possible measures acceptable to all. The Campaign to Stop Killer Robots, a disarmament group, said the outcome fell “drastically short.”

The CCW, also known as the Inhumane Weapons Convention, is a framework of rules that ban or limit weapons believed to cause unnecessary, indiscriminate, and unjustifiable suffering, including blinding lasers, incendiary explosives, and booby traps that do not distinguish between civilians and fighters. For now, the convention has no provisions for killer robots.

What Are Killer Robots?

Opinions differ on the exact definition of these weapons. However, they are generally considered to be weapons that make independent decisions with minimal or no human involvement. Rapid improvements in artificial intelligence, robotics, and image recognition are making such armaments possible.

The drones that the United States has used extensively in Iraq, Afghanistan, and other places are not considered robots, since they are operated remotely by people. The people who operate these drones choose their targets and decide whether to attack.

To war planners and strategists, killer robots offer the promise of keeping soldiers out of harm’s way and making decisions faster than a human would. Hence, more battlefield responsibilities are being given to autonomous systems like driverless tanks and pilotless drones that independently determine when and where to strike.

What Are The Objections?

Critics insist that it is morally distasteful to assign such lethal decision-making to machines, irrespective of their technological sophistication. How does such a machine manage to differentiate between an adult and a child, a fighter carrying a bazooka and a civilian with a broom, a hostile combatant ready to strike and a wounded or surrendering soldier?

Peter Maurer, the president of the International Committee of the Red Cross and a vocal opponent of killer robots, told the Geneva conference:

“Fundamentally, autonomous weapon systems raise ethical concerns for society about substituting human decisions about life and death with sensor, software and machine processes.”

Before the conference, Harvard Law School’s International Human Rights Clinic and Human Rights Watch called for steps toward a legally binding agreement that requires human control of killer robots at all times. In a briefing paper supporting their recommendations, the groups argued:

“Robots lack the compassion, empathy, mercy, and judgment necessary to treat humans humanely, and they cannot understand the inherent worth of human life.”

Other opponents believe that killer robots and other autonomous weapons might increase the risk of war instead of reducing it. Such weapons offer antagonists ways of inflicting great harm while reducing the risks to their own soldiers. In that context, New Zealand’s disarmament minister, Phil Twyford, stated:

“Mass-produced killer robots could lower the threshold for war by taking humans out of the kill chain and unleashing machines that could engage a human target without any human at the controls.”

Why Was The Geneva Conference Crucial?

Disarmament experts believed that the UN conference would be an ideal opportunity for governments to devise ways to regulate, if not ban, the use of killer robots under the CCW.

It was the culmination of many years of discussions by experts who had been asked to identify the issues and potential strategies for mitigating the threats that come with killer robots. But these experts could not agree even on basic questions.

What The Opponents Of A New Treaty Say

Some countries, like Russia, say that decisions to limit killer robots need to be unanimous, which in effect gives opponents a veto. The US, for its part, says that current international laws are adequate and that banning autonomous weapons technology would be inappropriate and premature.

Joshua Dorosin, the chief United States delegate to the conference, championed a nonbinding “code of conduct” for the use of killer robots. Disarmament proponents immediately dismissed the idea as a delaying tactic.

So far, the American military has invested heavily in artificial intelligence, partnering with the largest defense contractors, like Boeing, Lockheed Martin, Northrop Grumman, and Raytheon. That work has included projects to develop long-range missiles that detect moving targets based on radio frequency.

The US military is also developing swarm drones that can identify a specific target and attack it precisely, as well as automated missile-defense systems, according to research by opponents of these weapons systems. Some weapons, like the U.S. Air Force Reaper drones that were used in Afghanistan in 2018, could be turned into autonomous lethal weapons in the future.

The complex nature and varied uses of artificial intelligence make it harder to regulate than land mines and nuclear weapons, according to Maaike Verbruggen.

Verbruggen is an expert on emerging military security technology at the Centre for Security, Diplomacy and Strategy in Brussels. She believes that a lack of transparency about what different nations are building has created “fear and concern” among military leaders that they have to keep up. Ms. Verbruggen, who is currently working toward a Ph.D. on this topic, said:

“It’s very hard to get a sense of what another country is doing. There is a lot of uncertainty and that drives military innovation.”

Franz-Stefan Gady, a research fellow at the International Institute for Strategic Studies, believes that:

“The arms race for autonomous weapons systems is already underway and won’t be called off any time soon.”

Is There Conflict In The Armed Forces About Killer Robots?

Yes, there is disagreement about these machines. Even as the technology becomes more advanced, there has been reluctance to use autonomous weapons in real combat because of fears of costly mistakes. Mr. Gady stated:

“Can military commanders trust the judgment of autonomous weapon systems? Here the answer at the moment is clearly ‘no’ and will remain so for the near future.”

Debates about autonomous weapons, including killer robots, have spilled over into Silicon Valley. Google said in 2018 that it would not renew a contract with the Pentagon after thousands of its employees signed a letter protesting the firm’s work on a project that used AI to analyze and interpret images that could be used to select drone targets.

Google also developed new ethical guidelines preventing the use of its technology for surveillance and weapons.

Proponents of this technology believe that the US is not investing adequately to compete with rivals like China and Russia.

In October 2021, Nicolas Chaillan, the former chief software officer for the Air Force, said that he had resigned out of frustration with weak technological progress within the American military. He said that artificial intelligence has potential but that the military was not pursuing it far enough. Chaillan said that policymakers are held back by questions of ethics while other nations press ahead rapidly.

Where Have Autonomous Weapons Been Utilized Previously?

Few verified battlefield examples are currently available. Nonetheless, critics say that several incidents show the technology’s potential. In March 2021, UN investigators said that a “lethal autonomous weapons system” had been used by government-backed forces in Libya against militia fighters.

A drone called the Kargu-2, manufactured by a Turkish defense contractor, tracked and attacked the fighters as they fled a rocket attack, according to the report. The report left unclear whether the drones were acting autonomously or were controlled by humans.

In the Nagorno-Karabakh war in 2020, Azerbaijan fought Armenia using attack drones and advanced missiles that loiter in the air until they identify the precise signal of an assigned target.

What Next?

Most disarmament proponents believe that the UN conference has hardened their resolve to push for a new treaty in the coming years, like those that ban cluster munitions and land mines.

Daan Kayser is an autonomous weapons expert at PAX, a Netherlands-based peace advocacy group. He said that the UN conference’s failure to agree even to negotiate on killer robots was “a really plain signal that the C.C.W. isn’t up to the job.”

The chairman of the International Committee for Robot Arms Control, Noel Sharkey, said the meeting had shown that a new treaty was preferable to further CCW deliberations. He said:

“There was a sense of urgency in the room, if there’s no movement, we’re not prepared to stay on this treadmill.”