The Holaplex Marketplace Standard empowers brands and creatives to create marketplaces that they own

SOLANA BEACH, Calif., March 24, 2022 (GLOBE NEWSWIRE) — Holaplex, a community of creator-owned NFT storefronts, today announced a full-stack, open-source marketplace standard to replace the closed-source, corporate-controlled markets on Solana. Using the Holaplex NFT Marketplace Standard, any brand, collective, DAO or individual can provide the full NFT marketplace experience unique to their brand, curation, values or strategy.

The Holaplex NFT Marketplace Standard enables creators to easily launch branded, feature-complete marketplaces with no coding required. It comes with built-in access to the Holaplex Indexer, which gathers a creator’s Solana NFTs in one place once the creator’s wallet address is added. Collectors can then easily sort by attributes and collections from their favorite creatives.

The Holaplex NFT Marketplace Standard utilizes Metaplex’s Auction House Contract, an escrow-less listing system. This means NFTs stay in the owner’s wallet until sold and never need to move into a smart contract vault. Collectors can make and accept offers or list at a fixed price. The company’s open-source, on-chain model gives full custody and control to the marketplace operator. Creators not only own their NFTs but finally have ownership of the platform their NFTs and collectors operate on.

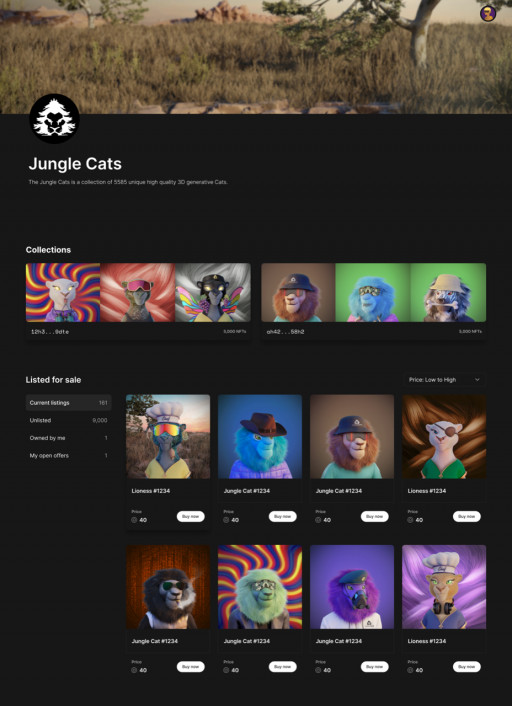

“At Jungle Cats, we believe 100% creator-owned, open-source powered marketplaces are the future of NFTs on Solana. By using the Holaplex Marketplace Standard, we have unlocked limitless potential for our project and community thanks to the open, non-extractive ethos the platform offers. As one of the most popular NFT projects on Solana we felt compelled to step forward and encourage every creator, collective and community to support the Holaplex Marketplace Standard.” – WAGMI KI, Founder of Jungle Cats

During the public beta, anyone interested in launching their own feature-complete NFT marketplace on Solana should join the Holaplex Community Discord.

“The Holaplex NFT Marketplace Standard was created in response to community demand to put an end to extractive secondary market practices and give creators open-source tools to control their destiny. Community members have full control over their collections; this includes setting transaction fees.” – Alex Kehaya, co-founder of Holaplex Community.

Today, both NFT creators and collectors are overwhelmed by an onslaught of NFTs that are either irrelevant, directly competitive, or grossly derivative. The Holaplex Marketplace Standard addresses this brand confusion and puts control back in the hands of NFT creators rather than centralized, closed-source marketplaces.

About Holaplex, Inc.

Holaplex, Inc. is the original provider of open-source software to the Holaplex Community of NFT creators and collectors. We are building a future that is decentralized, open source and permissionless for the world’s top creatives. Holaplex enables users to mint, list, and purchase NFTs on the Solana blockchain without needing to code. As an alternative to closed-source, privately owned NFT platforms, the decentralized, open-source Holaplex platform supports a community of users empowered to govern themselves and design flexible solutions.

Find us on Twitter, Discord and Instagram.